I. Introduction

Algorithmia, a marketplace for algorithms, reports [1] that despite a significant rise in AI budgets, 87% of data science projects never make it into production. Only 22% of companies using machine learning have successfully deployed a model to production.

Deploying an ML model involves working across several components: data, engineering, infrastructure, monitoring, and automation, among others.

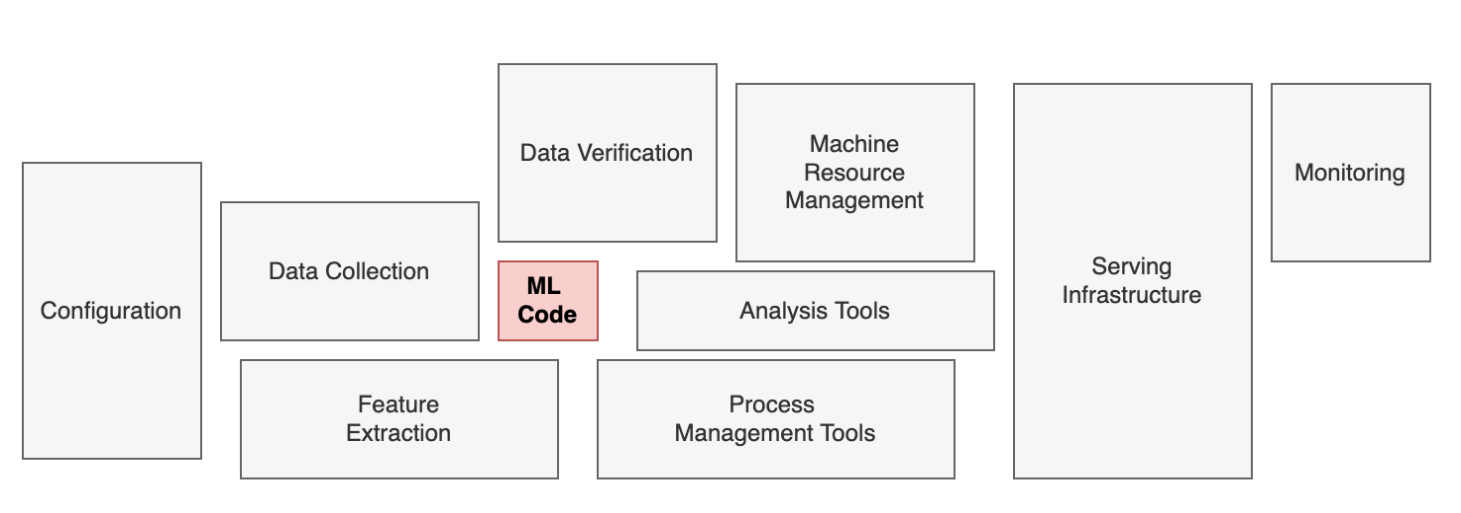

Real-world ML System

Let's look at the bigger picture of real-world ML systems. The ML code is a very small component [2]: coding and training ML models account for only a small fraction of the effort required to track, deploy, monitor, and make decisions with them at business scale.

So, what is making it challenging to deploy ML models to production? Is it the ML model itself or something else?

Before answering these questions, let's understand the fundamental difference between traditional software and ML. Unlike traditional software, ML is not just code; it's code + data. Because code and data evolve independently, ML systems are hard to track, manage, monitor, and automate.

In traditional software development, the automation practices that span continuous integration to continuous deployment (DevOps) have matured considerably over the last two decades, making it possible to ship software to production within minutes. But now we are at a point where every business wants to incorporate Artificial Intelligence / Machine Learning into its products. This new need to build AI/ML systems is redefining the principles of the SDLC and has catalysed a new engineering discipline named MLOps.

II. What is MLOps?

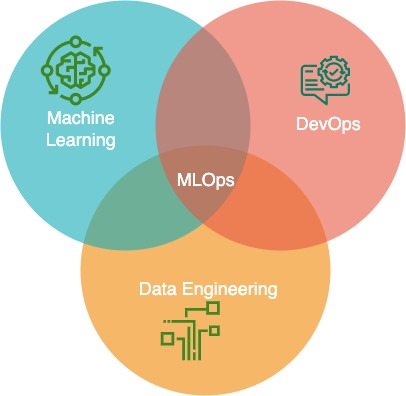

MLOps refers to a set of practices and tools that combine Machine Learning, DevOps, and Data Engineering, with the aim of deploying and maintaining ML systems in production reliably and efficiently.

MLOps is a multidisciplinary field

MLOps is a multidisciplinary field that exists at the intersection of Machine Learning, DevOps, and Data Engineering.

III. Stages in MLOps Process

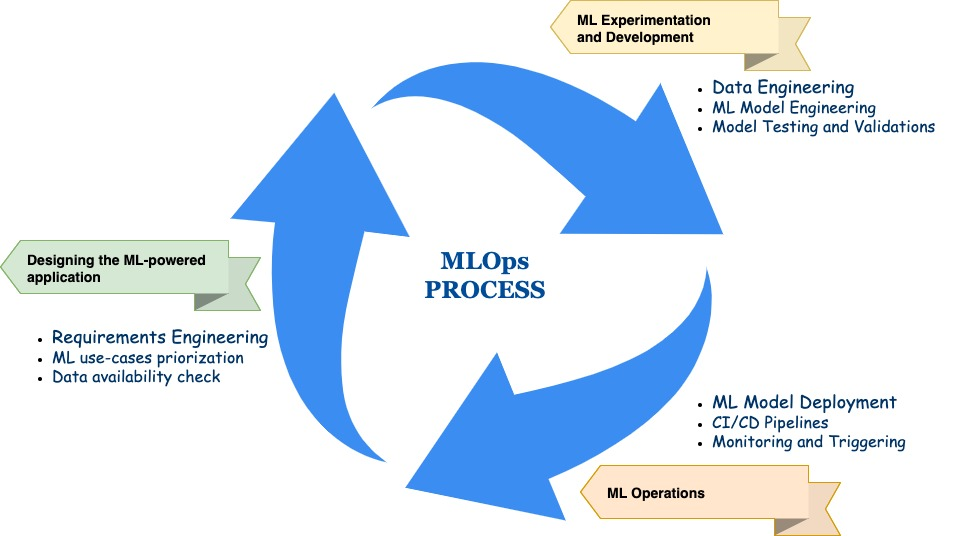

The complete MLOps process [3] includes three broad phases:

1. Designing the ML-powered Application
2. ML Experimentation and Development
3. ML Operations

MLOps Process

To better understand the MLOps process above, let's take a sample use case, weather forecasting, and see what activities we carry out in each phase.

Phase 1: Designing the ML-powered application

This phase focuses on understanding the business context, gathering requirements, and studying data availability, quality, and completeness to assess the feasibility of building an ML-based forecasting model. If the data is inadequate or of poor quality, we prioritise a rule-based solution over building an ML model.
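As a rough illustration of such a completeness study, the sketch below measures how often each required field is actually populated and compares that against a minimum bar. The field names, the sample observations, and the 0.9 threshold are all assumptions made for this example, not values from any real project.

```python
def completeness_report(rows, required_fields):
    """Fraction of rows with a non-missing value, per required field."""
    total = len(rows)
    report = {}
    for field in required_fields:
        present = sum(1 for row in rows if row.get(field) is not None)
        report[field] = present / total if total else 0.0
    return report


def ml_looks_feasible(report, min_completeness=0.9):
    """True when every required field meets the (assumed) completeness bar."""
    return all(fraction >= min_completeness for fraction in report.values())


# Hypothetical weather observations; None marks a missing reading.
observations = [
    {"temperature": 21.5, "humidity": 0.62},
    {"temperature": 19.0, "humidity": None},
    {"temperature": 22.1, "humidity": 0.58},
    {"temperature": 20.4, "humidity": None},
]

report = completeness_report(observations, ["temperature", "humidity"])
feasible = ml_looks_feasible(report)
```

Here half of the humidity readings are missing, so the check fails and a rule-based fallback would be the safer starting point. A real study would also look at value ranges, duplicates, and coverage over time.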

Phase 2: ML Experimentation and Development

In this phase, we consolidate, aggregate, and transform the features required to build a weather forecasting model. We try out various algorithms and architectures to find an accurate and stable model, and perform in-time and out-of-time validation to ensure the model is robust enough to deploy into production.
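To make the two kinds of validation concrete, here is a minimal sketch of one way to split dated records. In-time validation holds out records from the same period the model is trained on, while out-of-time validation uses only records from a later period. The record shape, the function name, and the "every fifth record" holdout rule are assumptions chosen for illustration.

```python
from datetime import date, timedelta


def split_in_time_out_of_time(records, cutoff, holdout_every=5):
    """Split (date, features, target) tuples into three sets.

    Records dated on or after `cutoff` form the out-of-time set.
    Earlier records go to training, except every `holdout_every`-th one,
    which is held out as in-time validation.
    """
    train, in_time, out_of_time = [], [], []
    in_period_index = 0
    for record in sorted(records, key=lambda r: r[0]):
        if record[0] >= cutoff:
            out_of_time.append(record)
            continue
        if in_period_index % holdout_every == holdout_every - 1:
            in_time.append(record)
        else:
            train.append(record)
        in_period_index += 1
    return train, in_time, out_of_time


# Ten hypothetical daily observations starting on 2020-01-01.
records = [
    (date(2020, 1, 1) + timedelta(days=i), {"pressure": 1000 + i}, 15.0 + i)
    for i in range(10)
]
train, in_time, out_of_time = split_in_time_out_of_time(
    records, cutoff=date(2020, 1, 8)
)
```

A model that scores well on the in-time set but poorly on the out-of-time set is likely fitting patterns that do not persist, which is a warning sign before deployment.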

Phase 3: ML Operations

We deploy the stable model found in the earlier phase, create a CI/CD pipeline to automate training and deployment, and enable model monitoring to alert on model failures, decay, and drift. For example, it made headlines that weather forecasting models became less accurate during the COVID-19 pandemic [4], as valuable data disappeared with the reduction in commercial flights. A sudden shift in the data or feature distribution can cause models to fail badly, so it is imperative to monitor production models continuously to detect failures and decay.
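One common way to quantify a shift in a feature's distribution is the Population Stability Index (PSI), which compares binned frequencies of a reference sample (for example, training data) with a current sample (recent production data). The sketch below is a simple standard-library version. The bin count, the smoothing constant, and the 0.2 alert threshold are assumptions for illustration; teams typically tune these to their own data.

```python
import math


def population_stability_index(reference, current, n_bins=10, eps=1e-6):
    """PSI between two non-empty numeric samples, using equal-width bins
    derived from the reference sample's range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins or 1.0  # avoid zero width for constant data

    def bin_fractions(values):
        counts = [0] * n_bins
        for value in values:
            index = int((value - lo) / width)
            # Values outside the reference range fall into the edge bins.
            index = min(max(index, 0), n_bins - 1)
            counts[index] += 1
        # Floor at eps so empty bins do not produce log(0).
        return [max(count / len(values), eps) for count in counts]

    ref = bin_fractions(reference)
    cur = bin_fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Hypothetical feature values: training-time sample vs. a shifted sample.
training_values = [float(v) for v in range(100)]
production_values = [v + 50.0 for v in training_values]

psi = population_stability_index(training_values, production_values)
drift_alert = psi > 0.2  # assumed alert threshold
```

A monitoring job could compute this per feature on a schedule and raise an alert, or trigger retraining, when the score crosses the chosen threshold.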

All three phases discussed above are interconnected and can influence each other.

How does MLOps play a central role in implementing an effective AI strategy?

MLOps enables rapid ML lifecycle management and supports strong model governance. It makes it easier for data science teams to collaborate with engineering teams and speeds up model development. Moreover, the ability to track, monitor, validate, and manage machine learning models accelerates the deployment process.

MLOps supports the optimisation and reuse of resources and saves significant time through automated workflows. It empowers teams to monitor models (to detect decay) and to build automated training and deployment pipelines that accommodate target and data drift.

IV. Conclusion

MLOps plays an integral role in implementing an effective AI strategy. It helps organisations cut development and deployment time through automation and build accurate, trusted models, thereby realising incremental business impact.

In a follow-up article, we will discuss how we are implementing MLOps at scale at TVS Motor Company.

References

[2] https://papers.neurips.cc/paper/5656-hidden-technical-debt-in-machine-learning-systems.pdf

[3] https://ml-ops.org/content/mlops-principles
